3 Best Website Scraping Tools That Save Money

Author: Jyothish

AIMLEAP Automation Works Startups | Digital | Innovation | Transformation

Data is the lifeblood of decision-making and innovation in the digital age. Whether you are a company looking for market insights or a researcher looking for vital information, web scraping is the key to unlocking the vast store of data available on the internet.

At its heart, web scraping is the automated extraction of data from web pages. It has transformed the way we obtain information from websites, converting unstructured web content into an organized format that is usable and relevant to your goals.
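As a minimal sketch of what converting unstructured web data into an organized format means, the Python snippet below uses only the standard library's `html.parser` to turn a small HTML fragment into a list of dictionaries. The markup and the `product`, `name`, and `price` class names are hypothetical; a real page needs rules matched to its own structure.

```python
from html.parser import HTMLParser


class ProductParser(HTMLParser):
    """Collects the text of elements marked with the hypothetical classes
    "name" and "price" into one dict per element with class "product"."""

    def __init__(self):
        super().__init__()
        self.records = []   # structured output: one dict per product
        self._field = None  # field currently being read, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "product" in classes:
            self.records.append({})  # start a new record
        elif "name" in classes or "price" in classes:
            self._field = "name" if "name" in classes else "price"

    def handle_endtag(self, tag):
        self._field = None  # stop capturing text when the element closes

    def handle_data(self, data):
        if self._field and self.records and data.strip():
            self.records[-1][self._field] = data.strip()


html = """
<div class="product"><h2 class="name">Desk Lamp</h2><span class="price">$24.99</span></div>
<div class="product"><h2 class="name">Office Chair</h2><span class="price">$129.00</span></div>
"""

parser = ProductParser()
parser.feed(html)
print(parser.records)
```

Dedicated tools and libraries offer richer selectors (CSS, XPath), but the principle is the same: locate elements, pull out their text, and store it as fields.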

Consider the potential: extracting real-time pricing data from e-commerce sites, gathering reviews and sentiment from social media, tracking changes in weather forecasts, or collecting a wide range of other data from across the web. This is the power of web scraping.

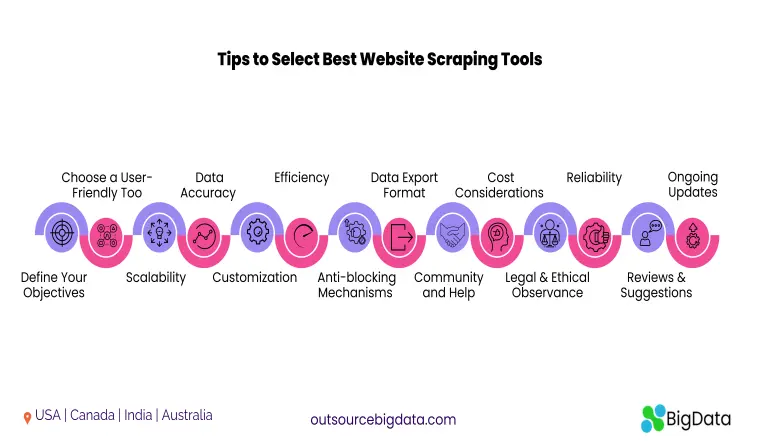

Tips for Selecting the Best Website Scraping Tools

Organizations, academics, and developers build their plans and ideas on data, and web scraping, the practice of automatically collecting data from websites, is a valuable way to obtain it. Choosing the right scraping tool, however, is critical to the success of your data extraction. Here are some important considerations to help you choose among data scraping services and tools:

1. Define Your Objectives

Before you begin web scraping, specify exactly what data you need and what your project aims to achieve. This clarity will guide your choice of scraping tool and strategy.

2. Choose a User-Friendly Tool

Look for a data scraping service with a simple user interface; you shouldn’t need to be a coding expert to use it. Many website scraping tools offer point-and-click selection to make navigation easier.

3. Scalability

Consider the tool’s scalability. Is it capable of handling both small and large-scale scraping projects? Scalability is critical for ensuring the long-term viability of your data extraction activities.

4. Data Accuracy

Check whether the tool extracts clean, structured data. Unorganized data is difficult to work with and may require additional cleaning and formatting.
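Cleaning is often where accuracy problems surface. The sketch below, which assumes US-style number formatting (comma as thousands separator, dot as decimal point), normalizes scraped price strings into `Decimal` values and returns `None` for text it cannot interpret, so bad rows can be flagged rather than stored. The function name `parse_price` is hypothetical.

```python
import re
from decimal import Decimal, InvalidOperation


def parse_price(raw):
    """Turn a scraped price string such as ' $1,299.00 ' into a Decimal.
    Returns None when no usable number is present (assumes US formatting)."""
    if raw is None:
        return None
    cleaned = re.sub(r"[^\d.]", "", raw)  # drop currency symbols, commas, spaces
    if not cleaned:
        return None
    try:
        return Decimal(cleaned)
    except InvalidOperation:  # e.g. "1.2.3" is not a valid number
        return None


rows = [" $1,299.00 ", "USD 24.99", "Call for price"]
print([parse_price(r) for r in rows])
```

`Decimal` is used instead of `float` so prices keep their exact cents when summed or compared.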

5. Customization

Look for a data scraping service that lets you adjust your scraping parameters, such as which fields to collect and how frequently to send requests. Customization is essential when you need specialized data or want to avoid overloading a website’s server.
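One parameter worth controlling is request pacing. The following is a minimal sketch, not any particular tool's implementation, of a per-host throttle that enforces a minimum delay between requests; the `PoliteThrottle` class and its 2-second default are illustrative assumptions. The clock and sleep functions are injectable so the logic can be tested without actually waiting.

```python
import time
from urllib.parse import urlsplit


class PoliteThrottle:
    """Enforces a minimum delay between requests to the same host.
    The default delay is an assumption; tune it for each site."""

    def __init__(self, min_delay=2.0, clock=time.monotonic, sleep=time.sleep):
        self.min_delay = min_delay
        self.clock = clock
        self.sleep = sleep
        self._last_request = {}  # host -> timestamp of the previous request

    def wait(self, url):
        """Block until it is polite to send another request to url's host."""
        host = urlsplit(url).netloc
        last = self._last_request.get(host)
        if last is not None:
            remaining = self.min_delay - (self.clock() - last)
            if remaining > 0:
                self.sleep(remaining)
        self._last_request[host] = self.clock()
```

In a crawl loop, you would call `throttle.wait(url)` immediately before each request. Tracking hosts separately means a slow pace on one site does not hold up requests to another.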

6. Efficiency and Speed

Extraction speed is crucial when you work with real-time or time-sensitive data, so choose a tool that scrapes efficiently, for example by fetching multiple pages in parallel.
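Because scraping is mostly waiting on the network, a bounded thread pool is a common way to speed it up. This sketch uses `concurrent.futures` from Python's standard library; `fetch` and `fetch_all` are hypothetical helper names, and the default of four workers is an assumption chosen to stay gentle on target sites. Failed pages are recorded rather than aborting the whole batch.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from urllib.request import urlopen


def fetch(url, timeout=10):
    """Download one page and return its body as text (real network call)."""
    with urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def fetch_all(urls, fetcher=fetch, max_workers=4):
    """Fetch many URLs concurrently with a bounded pool of worker threads.
    Returns (results, errors), both keyed by URL."""
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(fetcher, url): url for url in urls}
        for future in as_completed(futures):
            url = futures[future]
            try:
                results[url] = future.result()
            except Exception as exc:  # keep going if one page fails
                errors[url] = exc
    return results, errors
```

Keeping `max_workers` small trades some speed for a lighter load on each site; the `fetcher` parameter lets you swap in a throttled or proxied download function.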

7. Anti-blocking Mechanisms

Websites frequently implement safeguards, such as IP-based rate limits and CAPTCHAs, to prevent or limit web scraping. Make sure the tool you use can cope with these measures, for example through IP rotation and CAPTCHA handling.
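IP rotation usually means spreading requests across a pool of proxies. Below is a minimal round-robin sketch using `itertools.cycle` and `urllib.request.ProxyHandler`; the proxy addresses are hypothetical placeholders, and CAPTCHA handling is not shown. Rotation should only be used where the site’s terms permit automated access.

```python
from itertools import cycle
from urllib.request import ProxyHandler, build_opener

# Hypothetical proxy endpoints; replace with proxies you are authorized to use.
PROXIES = [
    "http://proxy-a.example:8080",
    "http://proxy-b.example:8080",
    "http://proxy-c.example:8080",
]


class ProxyRotator:
    """Hands out proxies in round-robin order so consecutive requests
    leave from different IP addresses."""

    def __init__(self, proxies):
        self._pool = cycle(proxies)

    def next_opener(self):
        proxy = next(self._pool)
        # Route both http and https traffic through the chosen proxy.
        opener = build_opener(ProxyHandler({"http": proxy, "https": proxy}))
        return proxy, opener


rotator = ProxyRotator(PROXIES)
```

Each call to `rotator.next_opener()` returns a proxy and an opener; `opener.open(url, timeout=10)` would then send the request through that proxy.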

8. Data Export Format

Examine the tool’s data export capabilities. It should let you save the extracted data in formats such as CSV or JSON, or write it directly to a database, for easy integration into your applications or analysis.
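To illustrate these export targets, the sketch below writes the same hypothetical records to CSV, JSON, and a SQLite table using only Python's standard library (`csv`, `json`, `sqlite3`). It writes to an in-memory buffer and database so it runs anywhere.

```python
import csv
import io
import json
import sqlite3

# Hypothetical scraped records.
records = [
    {"name": "Desk Lamp", "price": 24.99},
    {"name": "Office Chair", "price": 129.0},
]

# CSV: a header row followed by one row per record.
csv_buffer = io.StringIO()
writer = csv.DictWriter(csv_buffer, fieldnames=["name", "price"])
writer.writeheader()
writer.writerows(records)

# JSON: a single array of objects.
json_text = json.dumps(records, indent=2)

# Database: an in-memory SQLite table (pass a file path to persist it).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (name TEXT, price REAL)")
conn.executemany("INSERT INTO products (name, price) VALUES (:name, :price)", records)
conn.commit()
```

In practice you would open a real file with `open("products.csv", "w", newline="")` for the CSV writer and give `sqlite3.connect` a file path instead of `":memory:"`.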

9. Community and Support

When you run into problems or have questions, an active user community and responsive customer support can be extremely helpful.

10. Cost Considerations

Website scraping tools come with a variety of pricing structures: some are free, while others require a subscription. Weigh the tool’s cost against your budget and the value it provides.

11. Legal and Ethical Compliance

Check that your web scraping activities comply with copyright and data protection laws. Some websites’ terms of service may forbid scraping.
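Terms of service must be read by a person, but many sites also publish a machine-readable `robots.txt` file stating which paths crawlers should avoid. Respecting it is a widely accepted courtesy, though it is not a substitute for legal review. This sketch checks URLs against a hypothetical `robots.txt` with the standard library's `urllib.robotparser`; the user-agent string `my-scraper` is an assumption.

```python
from urllib.robotparser import RobotFileParser

# A hypothetical robots.txt. For a live site you would instead call
# rp.set_url("https://<site>/robots.txt") followed by rp.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

rp = RobotFileParser()
rp.parse(ROBOTS_TXT.splitlines())


def allowed(url, user_agent="my-scraper"):
    """True if the parsed robots.txt permits user_agent to fetch url."""
    return rp.can_fetch(user_agent, url)
```

`rp.crawl_delay(user_agent)` also exposes any `Crawl-delay` value, which can feed directly into request pacing.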

12. Reliability

Choose tools that are known for their dependability and stability. Frequent downtime or crashes can derail scraping projects.

13. Reviews and Suggestions

Read reviews and get recommendations from people who have used the tools you are considering. Their perspectives can help you make an informed decision.

14. Ongoing Updates

Scraping tools should be updated on a regular basis to accommodate changes in website structure and anti-scraping methods.
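Updates from the tool vendor are only half the story; your own pipeline should also notice when a site redesign breaks extraction. A simple approach, sketched below under the assumption that every record should contain a `name` and a `price`, is to measure the share of records with missing required fields and flag the run when it exceeds a threshold. The field names, the `extraction_health` helper, and the 20% threshold are illustrative.

```python
REQUIRED_FIELDS = ("name", "price")  # hypothetical schema for this example


def extraction_health(records, required=REQUIRED_FIELDS, max_missing_ratio=0.2):
    """Return (ok, missing_ratio). A sudden jump in records missing required
    fields is a common sign that the page structure changed."""
    if not records:
        return False, 1.0  # an empty run is treated as a failure
    missing = sum(
        1 for r in records if any(not r.get(field) for field in required)
    )
    ratio = missing / len(records)
    return ratio <= max_missing_ratio, ratio
```

Running this check after every scrape, and alerting when `ok` is false, turns silent breakage into a visible failure.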

3 Best Website Scraping Tools That Are Cost-Efficient

Choosing the web scraping tool that best fulfils your business requirements can be difficult, especially with so many tools on the market. To simplify your search, here are three cost-efficient options:

1. APISCRAPY

Website scraping has never been more sophisticated. APISCRAPY uses cutting-edge artificial intelligence to extract data from websites with unrivalled accuracy, automating the entire process and eliminating the need for manual data extraction.

Why spend hours integrating data when you can have everything at your fingertips? APISCRAPY is a ready-to-use data API that provides structured data in the format of your choice. Whether you prefer JSON, CSV, or another format, our API delivers your data in the most convenient form.

Key Features

- Designed to transform web and app data into readily usable information.

- Uses AI-augmented, pre-built automation, so no coding, setup, or infrastructure investment is required.

- Offers pre-built data classification, access to data in real time or on a schedule, and intuitive dashboards for easy monitoring.

- Includes built-in database integration.

- Offers outcome-based pricing, so you pay only for the results you achieve.

How is APISCRAPY Cost-Efficient?

Why pay for the process when you can pay for the outcome? APISCRAPY’s distinctive “Pay for Outcome” pricing model means you pay only for successfully acquired data. No more worrying about incomplete or inaccurate results: we guarantee that the data you pay for is of the highest quality.

2. ParseHub

While there are numerous web scraping tools on the market, few are offered for free. If you are looking for a no-cost tool for data collection, consider ParseHub, an easy-to-use web scraper.

It is also a robust tool that lets you extract the data you need with little effort. Even on complex websites, it can collect data from JavaScript- and AJAX-driven pages and store that data for your use.

Through its REST API, you can retrieve and save your extracted data in Excel and JSON formats. However, it may lack some features found in other tools. Users also cite disadvantages: a steep learning curve that makes it less user-friendly, and support that is sometimes lacking.
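As an illustration of pulling results programmatically, the sketch below builds a request for a project’s most recent completed run. The base URL, the `/projects/{token}/last_ready_run/data` path, the `api_key` and `format` parameters, and the possibility of gzip-compressed responses reflect our understanding of ParseHub’s API v2 and are assumptions to verify against ParseHub’s current documentation. The helper names are hypothetical, and any tokens you pass are placeholders for your own credentials.

```python
import gzip
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed ParseHub API v2 base URL; verify against ParseHub's API docs.
BASE = "https://www.parsehub.com/api/v2"


def last_run_data_url(project_token, api_key, fmt="json"):
    """Build the URL for a project's most recent completed run (assumed endpoint)."""
    query = urlencode({"api_key": api_key, "format": fmt})
    return f"{BASE}/projects/{project_token}/last_ready_run/data?{query}"


def decode_body(raw):
    """Responses may be gzip-compressed; detect that by the gzip magic bytes."""
    if raw[:2] == b"\x1f\x8b":
        raw = gzip.decompress(raw)
    return raw.decode("utf-8")


def fetch_last_run(project_token, api_key):
    """Download and parse the JSON results (performs a real network request)."""
    with urlopen(last_run_data_url(project_token, api_key), timeout=30) as resp:
        return json.loads(decode_body(resp.read()))
```

Checking the magic bytes rather than trusting headers lets the same decoder handle both compressed and plain responses.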

Key Features of ParseHub Tool

- A visual point-and-click interface that enables users to select the data they wish to extract without the need to write any code.

- Scrape data from websites that utilize JavaScript, AJAX, and other technologies.

- Handle CAPTCHAs and other anti-scraping measures.

- Schedule routine data updates and receive notifications when the data changes.

- Integration with a range of other tools and platforms, including Excel, Google Sheets, and Zapier.
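ParseHub’s scheduling and change notifications are built into the product. If you need similar change detection in your own pipeline, one generic approach (not ParseHub’s internal method) is to fingerprint each run’s results and compare the hash with the previous run’s. This sketch uses `hashlib.sha256` over a canonical JSON form, so differences in record order or key order do not trigger false alarms; `fingerprint` and `has_changed` are hypothetical helper names.

```python
import hashlib
import json


def fingerprint(records):
    """Stable SHA-256 hash of a result set. Key order and record order are
    normalized so only real content changes alter the hash."""
    canonical = sorted(json.dumps(r, sort_keys=True) for r in records)
    return hashlib.sha256("\n".join(canonical).encode("utf-8")).hexdigest()


def has_changed(previous_hash, records):
    """Compare this run's results with the hash saved from the last run.
    Returns (changed, current_hash); store current_hash for the next run."""
    current = fingerprint(records)
    return current != previous_hash, current
```

Only the hash needs to be stored between runs, so the check stays cheap even for large result sets.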

ParseHub is on our list of best website scraping tools, but it has a few shortcomings:

- It is hard to troubleshoot on large projects.

- Output is often limited (it may be unable to publish the complete scraped output).

If you can accept these disadvantages, ParseHub earns its place on the list of best website scraping tools.

3. Scrapy

Scrapy is an open-source web crawling framework that Python developers use to build scalable web crawlers. As a comprehensive framework, it handles the complexities involved in building crawlers, such as request handling and proxy middleware.

It is intended for developers and data scientists who work in Python, offering a robust and adaptable API for building web scrapers and managing these features. In short, it is a full-fledged crawling solution for developers who want to create scalable web crawlers in Python.

Scrapy is cross-platform, running on Linux, Windows, macOS, and more. It is known for fast data scraping and provides all the essential tools for data extraction. It also lets you save extracted data in the format and structure of your choice.
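Much of Scrapy’s flexibility comes from project settings. The fragment below uses real Scrapy setting names (`ROBOTSTXT_OBEY`, `DOWNLOAD_DELAY`, `CONCURRENT_REQUESTS_PER_DOMAIN`, `AUTOTHROTTLE_ENABLED`, `FEEDS`) with illustrative values; the project name and output paths are hypothetical, and the `overwrite` feed option assumes Scrapy 2.4 or later. These settings apply to whatever spiders the project defines.

```python
# settings.py: fragment of a Scrapy project's settings (values are illustrative)
BOT_NAME = "price_monitor"           # hypothetical project name

# Politeness and anti-overload controls
ROBOTSTXT_OBEY = True                # skip URLs disallowed by robots.txt
DOWNLOAD_DELAY = 1.0                 # base delay (seconds) between requests to a site
CONCURRENT_REQUESTS_PER_DOMAIN = 4   # cap parallel requests per domain
AUTOTHROTTLE_ENABLED = True          # adapt the delay to observed server latency

# Export: write every scraped item to both a JSON and a CSV feed
FEEDS = {
    "output/items.json": {"format": "json", "encoding": "utf8", "overwrite": True},
    "output/items.csv": {"format": "csv"},
}
```

With these settings in a project’s `settings.py`, running `scrapy crawl <spider_name>` would write the scraped items to both feed files.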

Key Features of Scrapy

- Offers a robust and extensible spider development API, allowing users to define data extraction and processing methods.

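- Can be extended with downloader middlewares (for example, for proxy rotation) to cope with anti-scraping measures; CAPTCHAs, however, usually require a third-party solving service.

- Can collect AJAX-loaded content by requesting the underlying data endpoints directly, and JavaScript rendering can be added through plugins such as scrapy-playwright or scrapy-splash.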

- Built-in downloader that can handle HTTP requests and responses, manage cookies, and follow redirects, enhancing the scraping process.

- The Scrapyd service enables users to schedule and execute spiders in the background, facilitating automated and regular data extraction.

Scrapy also has a few shortcomings:

- The Scrapy community is active and supportive, but its documentation and support resources may be more limited than those of other web scraping tools.

- Adding JavaScript support to a Scrapy crawler can be time-consuming and demands more expertise and effort than tools designed for JavaScript-heavy websites.

Despite these shortcomings, Scrapy is a robust and versatile web scraping framework for Python developers who are willing to invest the time and effort to use its capabilities effectively.

Conclusion

While ParseHub and Scrapy are both capable website scraping tools, APISCRAPY stands out as the best choice for businesses and individuals who want to cut costs while effectively extracting and using web data. Its comprehensive feature set, user-friendly interface, and cost-efficient pricing model set it apart. Its ability to convert web data into actionable insights without coding or significant infrastructure investment makes it the top choice for anyone seeking an intelligent, budget-friendly web scraping solution.
